D Computer practical 4 - Analysis for Binary and Survival data

R practicalities - click here

There are many, many packages in R that implement methods for designing and analysing clinical trials (see a list at CRAN task view). We will look at some of these, and will also write our own code for some tasks. Remember that to install a package, you can do

If you have problems running R on your laptop, or on the university machines, the most foolproof way might be to use Github codespaces (thanks to Louis Aslett, who developed this). You may already be familiar with this from previous modules.

An advantage of this is that you can open the same codespace (the same instance of R) from any computer, so if you plan to work on things (for example your summative assignment, which will involve some R) from more than one computer, this might be ideal.

Here is a guide to getting set up.

In this practical we’ll focus on the analysis of binary and survival data. We’ll make use of several new packages. Installing them all now should hopefully prevent it from disrupting your flow! We’ll load them as we go along.

Notice that where there are code snippets that you want to copy directly, you can hover your cursor in the top right of the code box and a ‘copy’ icon will appear.

install.packages(c("tidyverse", "HSAUR", "HSAUR3", "pROC", "survival", "ggsurvfit", "survminer", "car"))D.1 Binary outcome data

The dataset we’ll use to explore Binary outcome data is the respiratory dataset, from the package HSAUR. In this trial, patients across two centres were randomly assigned to receive treatment or placebo, and their respiratory status was recorded as either ‘poor’ or ‘good’. Measurements were recorded at months 0, 1, 2, 3, 4, but we will keep only month 0 (as a baseline) and month 4 (as a final outcome). We process the data below:

library(HSAUR)

data("respiratory")

# Keep only months 0 and 4

resp_04 = respiratory |>

filter(month %in% c(0,4))

## convert to wider to have one row per participant

resp_df = pivot_wider(

data = resp_04,

id_cols = c("subject", "centre", "treatment", "sex", "age"),

names_from = "month",

names_prefix = "status",

values_from = "status"

)For each patient, we have the following baseline covariates:

- sex

- age

- treatment centre (centre 1 or centre 2)

- symptom status (poor or good).

The outcome variable is whether the status of the patient’s symptoms are poor or good after four months of the trial. Note that unusually we do have the baseline observation of this quantity. Since a lot of the earlier methods we covered in Chapter 6 involve simple calculations with the data (and working with survival data is much less familiar), we will skip straight logistic regression.

D.1.1 Logistic regression

Logistic regression give us a way to account for the baseline covariates. To fit a logistic regression in R we use the function glm. This fits all kinds of generalised linear models, and so we specify that we want a logistic regression by choosing family = binomial(link='logit').

The code is included in all examples in Sections 6.3 and 6.4, so if you aren’t sure how to do something they would be a good place to look.

Exercise D.1 Fit a logistic regression model to the resp_df data set, being careful to check for any issues with the data.

Click for solution

Solution. The first model we fit involves all covariates linearly:

model1 = glm(status4 ~ centre + treatment + sex + age + status0,

family = binomial(link='logit'), data=resp_df)

summary(model1)##

## Call:

## glm(formula = status4 ~ centre + treatment + sex + age + status0,

## family = binomial(link = "logit"), data = resp_df)

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) -0.83921 0.67498 -1.243 0.213757

## centre2 1.27397 0.48546 2.624 0.008684 **

## treatmenttreatment 1.08498 0.47415 2.288 0.022122 *

## sexmale 0.33480 0.60171 0.556 0.577924

## age -0.02978 0.01805 -1.650 0.099035 .

## status0good 1.72562 0.47024 3.670 0.000243 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for binomial family taken to be 1)

##

## Null deviance: 153.44 on 110 degrees of freedom

## Residual deviance: 119.34 on 105 degrees of freedom

## AIC: 131.34

##

## Number of Fisher Scoring iterations: 4and we can inspect the variance inflation factor to check for multicollinearity:

## centre treatment sex age status0

## 1.171418 1.120405 1.213698 1.194655 1.066666We can also try one with age squared, since the effect might not be linear (the age range is 11 to 68 which is quite a wide range). While we’re at it we’ll remove sex from the model since it appears to have no effect

model2 = glm(status4 ~ centre + treatment + age + I(age^(2)) + status0,

family = binomial(link='logit'), data=resp_df)

summary(model2)##

## Call:

## glm(formula = status4 ~ centre + treatment + age + I(age^(2)) +

## status0, family = binomial(link = "logit"), data = resp_df)

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) 1.517788 1.512818 1.003 0.315723

## centre2 1.268735 0.486975 2.605 0.009178 **

## treatmenttreatment 0.996219 0.465348 2.141 0.032290 *

## age -0.173598 0.087107 -1.993 0.046271 *

## I(age^(2)) 0.002000 0.001167 1.714 0.086530 .

## status0good 1.671810 0.471304 3.547 0.000389 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for binomial family taken to be 1)

##

## Null deviance: 153.44 on 110 degrees of freedom

## Residual deviance: 116.44 on 105 degrees of freedom

## AIC: 128.44

##

## Number of Fisher Scoring iterations: 4This is an improvement in terms of AIC and deviance, so we’ll use this model (for now).

Exercise D.2 Perform some diagnostics on the model you fitted in Exercise D.1, focussing on the model’s ability to discriminate between outcomes, and also on how well the model calibrates to the observed data.

Click for solution

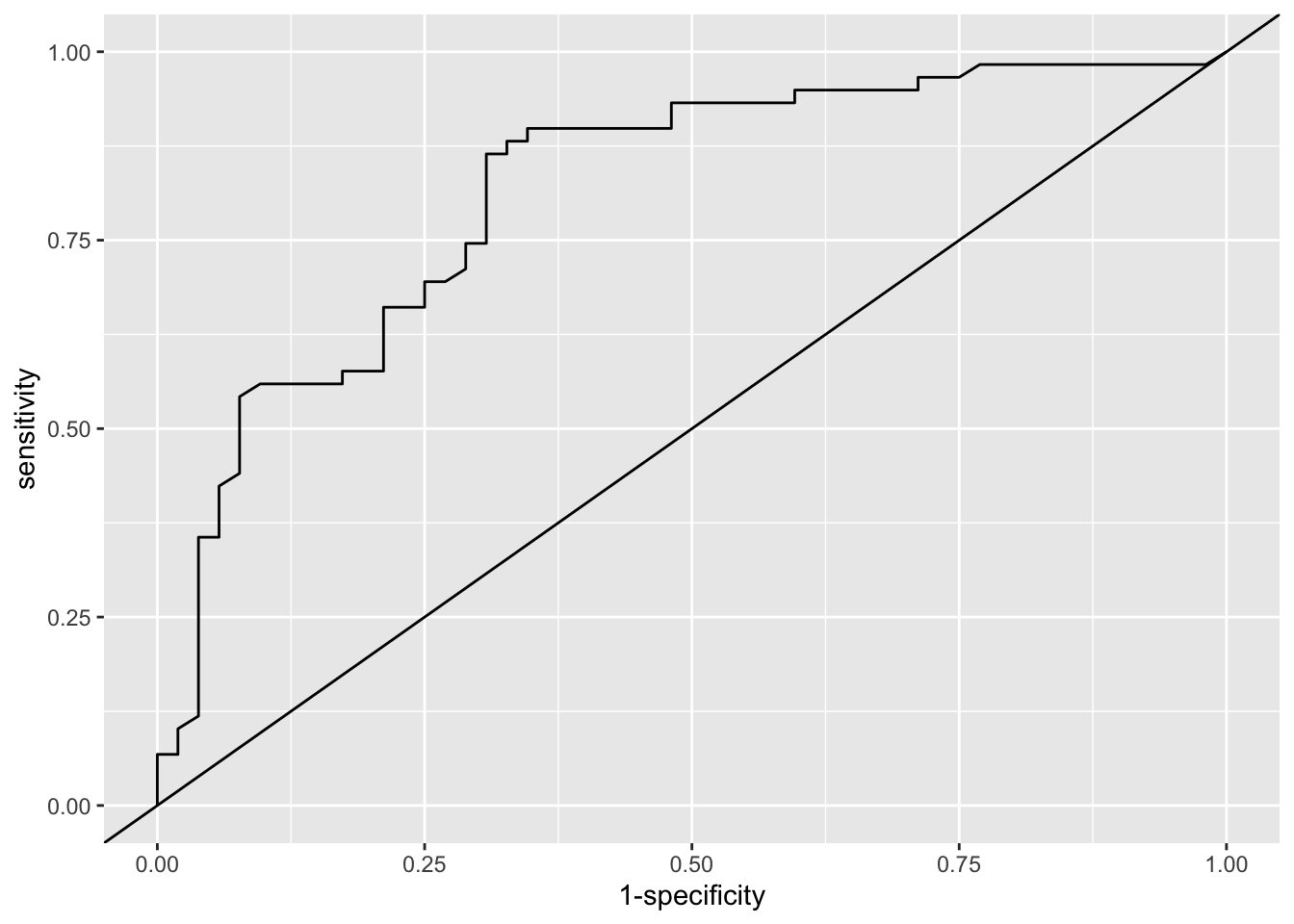

Solution. Firstly we’ll use ROC analysis to assess the model in terms of how well it discriminates the participants in terms of their respiratory status at 4 months.

library(pROC)

fit_resp = fitted(model2) # Fitted values from model3

out_resp = resp_df$status4 # outcome values (1 or 2)

roc_resp_df = data.frame(fit = fit_resp, out = out_resp)

roc_resp = roc(data=roc_resp_df, response = out, predictor=fit)

roc_resp##

## Call:

## roc.data.frame(data = roc_resp_df, response = out, predictor = fit)

##

## Data: fit in 52 controls (out poor) < 59 cases (out good).

## Area under the curve: 0.8155The area under the curve (AUC) is around 0.8, which is generally considered quite good.

We can also plot the ROC curve

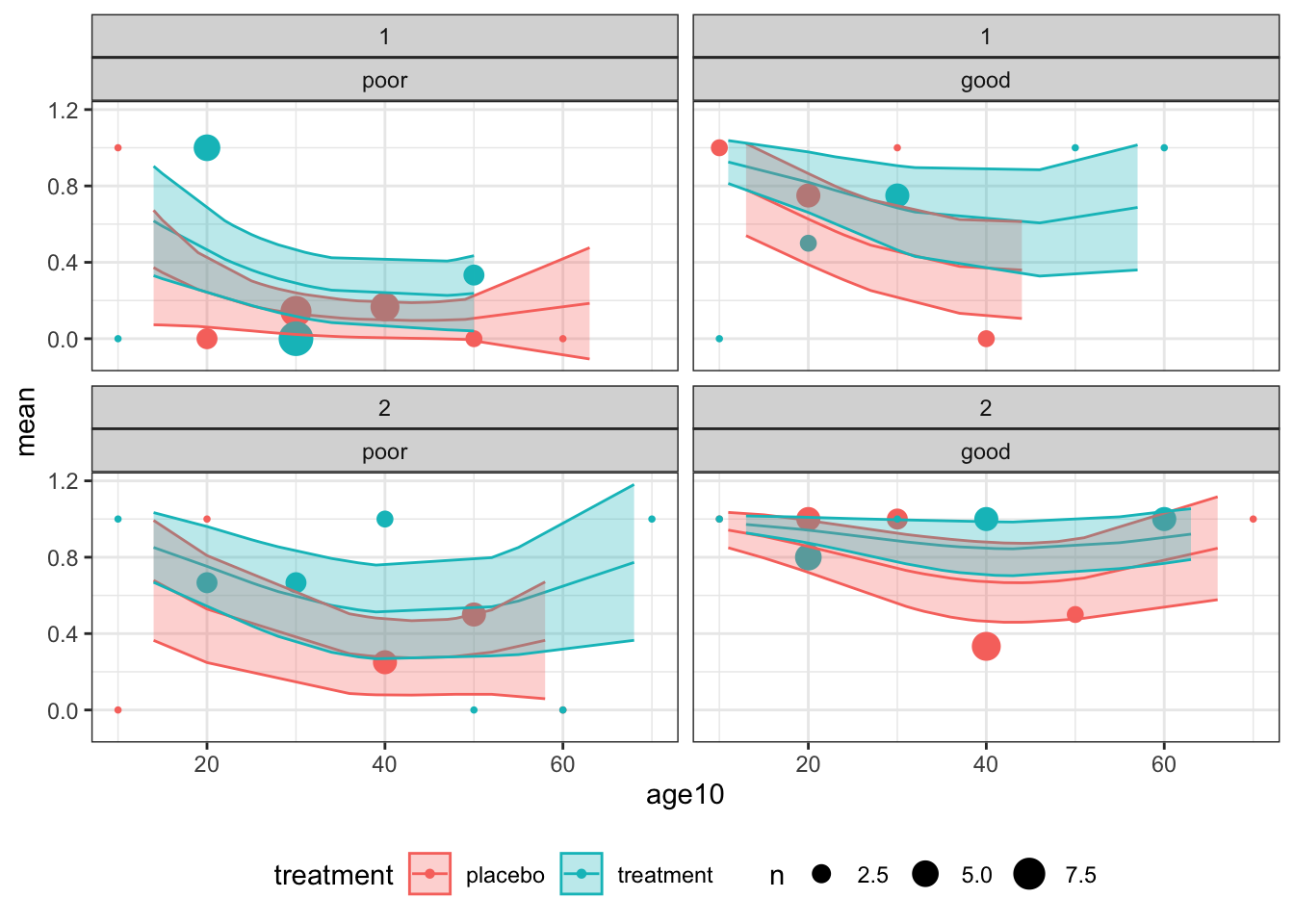

Next we’ll assess the model in terms of calibration.

## Group the observations into age groups (I've chosen 10 year bins)

resp_df$age10 = round(resp_df$age, -1)

# find mean status (minus 1 because factor levels are 1 and 2) and number of obs

# for each combination of factor/age group levels

resp_sum = resp_df %>%

group_by(age10, centre, treatment, status0) %>%

summarise(

mean = mean(as.numeric(status4))-1,

n=length(age10),

.groups="keep")

# Plot the observations, using facet_wrap to deal with two of the factors

obs_plot = ggplot(data=resp_sum, aes(x=age10, col=treatment)) +

geom_point(aes(y=mean, size=n), pch=16) +

theme_bw()+

facet_wrap(c("centre", "status0")) + theme(legend.position = "bottom")

## To include the estimate and SD from the model, we use the original dataset with continuous age,

# fit model 2 including an estimate of SE, and use geom_line and geom_ribbon to add the fitted model with 95% intervals

fit_resp = predict(model2, newdata=resp_df, se.fit=T, type="response")

resp_df$fit = fit_resp$fit

resp_df$fit_se = fit_resp$se.fit

obs_plot +

geom_line(data=resp_df, aes(x=age, y=fit)) +

geom_ribbon(

data=resp_df,

aes(x=age, ymin = fit - 1.96*fit_se, ymax = fit + 1.96*fit_se, fill = treatment),

alpha=0.3

)

Figure D.1: Observations and fitted model for combinations of centre (1: top and 2: bottom) for baseline status (poor: left and good: right)

Overall, the model does not appear to be a great fit, but there is no systematic cause for concern.

These plots also give us an idea of the model’s fit for different categories of patient. For example, the probability of good respiratory symptoms at 4 months is much higher for a patient in the treatment group who had good symptoms at month zero, especially if they are young and belong to centre 2. The model’s output is much more bleak for a patient in group \(C\) at centre 1 who had poor symptoms at month zero.

Having performed some diagnostics, we can proceed to use our model to provide information about the effect of the treatment to improve respiratory symptoms.

Exercise D.3 Compute a 95% CI for the odd ratio for this trial, using the model you built in Exercise D.1.

Click for solution

Solution. We can find the details of the coefficients via

##

## Call:

## glm(formula = status4 ~ centre + treatment + age + I(age^(2)) +

## status0, family = binomial(link = "logit"), data = resp_df)

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) 1.517788 1.512818 1.003 0.315723

## centre2 1.268735 0.486975 2.605 0.009178 **

## treatmenttreatment 0.996219 0.465348 2.141 0.032290 *

## age -0.173598 0.087107 -1.993 0.046271 *

## I(age^(2)) 0.002000 0.001167 1.714 0.086530 .

## status0good 1.671810 0.471304 3.547 0.000389 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for binomial family taken to be 1)

##

## Null deviance: 153.44 on 110 degrees of freedom

## Residual deviance: 116.44 on 105 degrees of freedom

## AIC: 128.44

##

## Number of Fisher Scoring iterations: 4and find that the estimate of the log OR is 0.996, with an SE of 0.465, and therefore a 95% CI for the log OR is

est_logOR = summary(model2)$coefficients[3,1]

se_logOR = summary(model2)$coefficients[3,2]

logOR_CI = c(est_logOR - qnorm(0.975)*se_logOR, est_logOR + qnorm(0.975)*se_logOR)Finally we can use this to find a CI for the OR itself

## [1] 1.087796 6.741514We call this the adjusted odds ratio, since we have adjusted for other covariates. Since this confidence interval does not contain the null value (1 for the OR), we see that the treatment effect is significant.

Note that these values are still quite difficult to interpret - this is an important issue in binary outcome RCTs in particular, as you’ll see in Formative 2.

D.2 Analysis for Survival data

The packages we need for this section are survival, ggsurvfit and survminer, so load those now.

The first dataset we will use is aml - this dataset is from a trial investigating whether the standard course of chemotheraphy should be extended for some additional cycles (‘maintenance’) for patients with Acute Myelogenous Leukemia.

Exercise D.4 Look at the help file for the aml dataset and make sure you understand what each variable is doing.

R might ask which aml dataset you want - if it does, choose the one from the survival package.

Click for solution

Solution. The data set aml has three columns

time- survival or censoring time for each patient. This is the last time at which that patient was recorded.status- censoring status. By convention, this is 1 if a death is observed (ie. for complete data) and 0 for censored data (ie. the time in thetimecolumn was the last time that patient was seen, and they were still alive)x- maintenance chemotherapy given? This is the treatment variable

The first step is to combine the first two columns into a form we can use. We do this using the Surv function in the package survival, which creates a ‘survival object’ that we can then use in various other functions. This object contains the times and the information about which observations are censored.

To create a survival object from some dataset dataframe containing a time variable and a censoring status variable, the general form is

or if you prefer,

Exercise D.5 Use the Surv function now on the aml data. The output will contain some notation you probably haven’t seen before - can you work out what it means?

Click for solution

Solution. We create the survival object for aml by

## [1] 9 13 13+ 18 23 28+ 31 34 45+ 48 161+ 5 5 8 8 12 16+ 23 27

## [20] 30 33 43 45This is a vector of the time values, in the same order as in aml. You’ll notice that some have a ‘+’ attached. This denotes the censored observations (the notation reflects the fact that the true time of death/the event will be greater than this).

D.2.1 Fitting a survival curve

The next thing we probably want to do is to estimate the survival function and plot the survival curve. The first method we’ll use is the Kaplan-Meier estimator.

D.2.1.1 Kaplan-Meier

To fit a Kaplan-Meier survival curve, we use the function survfit, which is specified using a formula, much like lm or glm. To fit a Kaplan-Meier estimate with a data frame split by treatment effect, the general form is

We can then use summary to see the intermediary calculations at each step, and plot (for base plot) or ggsurvfit (from ggplot2 and ggsurvfit) to plot the curves.

Exercise D.6 Fit a Kaplan-Meier estimator to the aml data. View the table using summary. Plot the curves.

Click for solution

Solution. To fit the Kaplan-Meier estimator we use

We can then look at the summary table and plot the data by

## Call: survfit(formula = surv_aml ~ x, data = aml)

##

## x=Maintained

## time n.risk n.event survival std.err lower 95% CI upper 95% CI

## 9 11 1 0.909 0.0867 0.7541 1.000

## 13 10 1 0.818 0.1163 0.6192 1.000

## 18 8 1 0.716 0.1397 0.4884 1.000

## 23 7 1 0.614 0.1526 0.3769 0.999

## 31 5 1 0.491 0.1642 0.2549 0.946

## 34 4 1 0.368 0.1627 0.1549 0.875

## 48 2 1 0.184 0.1535 0.0359 0.944

##

## x=Nonmaintained

## time n.risk n.event survival std.err lower 95% CI upper 95% CI

## 5 12 2 0.8333 0.1076 0.6470 1.000

## 8 10 2 0.6667 0.1361 0.4468 0.995

## 12 8 1 0.5833 0.1423 0.3616 0.941

## 23 6 1 0.4861 0.1481 0.2675 0.883

## 27 5 1 0.3889 0.1470 0.1854 0.816

## 30 4 1 0.2917 0.1387 0.1148 0.741

## 33 3 1 0.1944 0.1219 0.0569 0.664

## 43 2 1 0.0972 0.0919 0.0153 0.620

## 45 1 1 0.0000 NaN NA NA

We can see from the table that the lower curve is the non-maintained arm - there is only one survivor of this group, and the data finish at \(t=45\).

The function add_risktable() adds information about the numbers at risk and numbers of events. The function add_confidence_interval() adds a confidence interval, and we see that with the aml data the uncertainty is huge.

D.2.1.2 Fitting an exponential distribution

To fit an exponential distribution, we need to estimate \(\hat\lambda_C\) and \(\hat\lambda_T\), using

\[\hat\lambda_X = \frac{m_X}{\sum\limits_{i=1}^{n_X} t_i} = \frac{m_X}{t^+_X}, \] where \(n_X\) is the number of observations \(t_1,\ldots,t_{n_X}\) in group \(X\), of which \(m_X\) are censored.

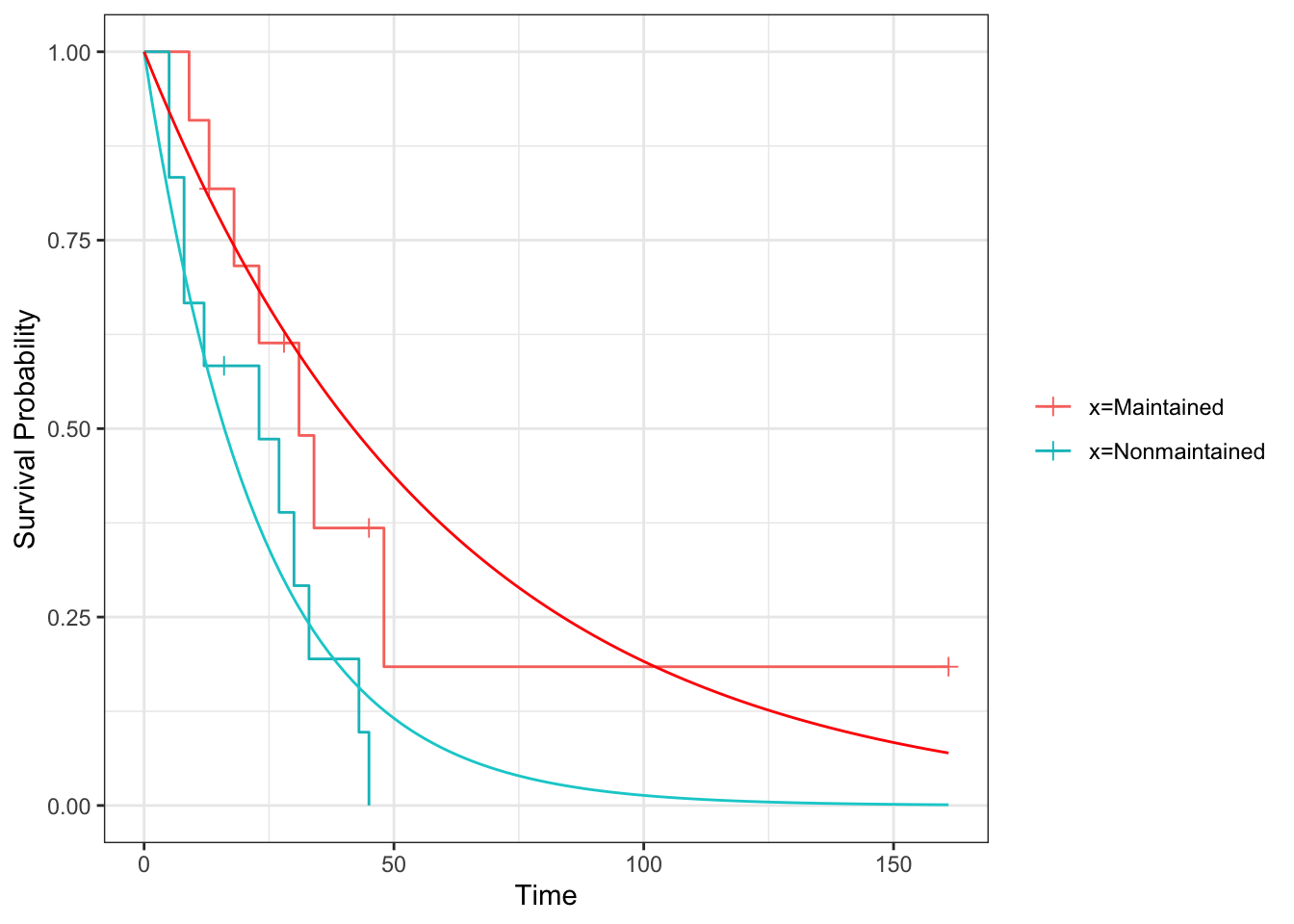

Exercise D.7 Fit an exponential distribution for each treatment group to the aml data and plot the resulting estimated survival curves, along with the Kaplan Meier estimators from Exercise D.6 (for comparison).

Click for solution

Solution. To calculate \(\hat\lambda_C\) and \(\hat\lambda_T\) we need to find \(m_C,\;\,m_T\;,t^+_C\) and \(t^+_T\).

mC_aml = sum((aml$status==1)&(aml$x=="Nonmaintained"))

mT_aml = sum((aml$status==1)&(aml$x=="Maintained"))

tsum_aml_C = sum(aml$time[aml$x=="Nonmaintained"])

tsum_aml_T = sum(aml$time[aml$x=="Maintained"])

lamhat_aml_C = mC_aml / tsum_aml_C

lamhat_aml_T = mT_aml / tsum_aml_TWe can then plot the Survival curves using geom_function

# Define survival function for exponential density

exp_st = function(t, lambda){exp(-lambda*t)}

km_aml %>% ggsurvfit() + ylim(0,1) + theme_bw() +

add_censor_mark() +

geom_function(fun=exp_st, args=list(lambda = lamhat_aml_C), col="darkturquoise") +

geom_function(fun=exp_st, args=list(lambda = lamhat_aml_T), col="red")

D.2.2 Comparing survival curves

Having found the MLEs for the aml dataset, assuming an exponential distribution, we can now immediately conduct a likelihood ratio test.

D.2.2.1 Likelihood ratio test

Recall that our test statistic (which we found in Section 8.1) is

\[ \lambda_{LR} = 2\left(m_C\log\left(\frac{m_C}{t^+_C}\right) + m_T\log\left(\frac{m_T}{t^+_T}\right) - m\log\left(\frac{m}{t^+}\right)\right),\]

which we then refer to a \(\chi^2_1\) distribution.

Exercise D.8 Conduct a likelihood ratio test for the aml data, with the null hypothesis that the survival curves are the same for both treatment groups. Before you calculate the answer, think about what you expect to see.

Click for solution

Solution. We already have the \(m_X\) and \(t^+_X\) from Exercise D.7, and we can easily find \(t^+\) and \(m\) from these.

and we can use these to compute \(\lambda_{LR}\):

LRstat_aml = 2*(mC_aml*log(mC_aml/tsum_aml_C) + mT_aml*log(mT_aml/tsum_aml_T) - m_aml*log(m_aml/tsum_aml))

LRstat_aml## [1] 4.061349Finally, we refer this to \(\chi^2_1\)

## [1] 0.04387544We find that we have just enough evidence to reject \(H_0\) at the 95% level.

D.2.2.2 Log-rank test

Because our computer practicals are early in the week we haven’t covered this material yet in lectures. So, it might be sensible to return to it at a later date, and instead to continue to the extension question if you still have time in the session.

The log-rank test is most easily found using the function survdiff. This function has an argument rho that controls the type of test. If we set rho=0 then it performs a log-rank test.

The general form is similar to survfit:

Exercise D.9 Use survdiff to do a log rank test on the aml data. Do you expect the results to be similar to your results from Exercise D.8?

Click for solution

Solution. To conduct a log rank test we use

## Call:

## survdiff(formula = surv_aml ~ x, data = aml, rho = 0)

##

## N Observed Expected (O-E)^2/E (O-E)^2/V

## x=Maintained 11 7 10.69 1.27 3.4

## x=Nonmaintained 12 11 7.31 1.86 3.4

##

## Chisq= 3.4 on 1 degrees of freedom, p= 0.07This is not that close to the result of the likelihood ratio test, probably reflecting the less-than-perfect fit of the exponential survival curve.

D.2.2.3 Cox regression

Finally, we will fit the Cox regression model. This is done using the function coxph in the package survival. Look at the help file and the examples in Section 8.3.2 to understand how to use this function.

Exercise D.10 Fit a Cox proportional hazards model to the data. Do you think this is an appropriate model to use? How influential is the treatment?

Click for solution

Solution. In the aml dataset there are no baseline covariates, so our only dependent variable is the treatment group variable x.

## Call:

## coxph(formula = Surv(time, status) ~ x, data = aml)

##

## coef exp(coef) se(coef) z p

## xNonmaintained 0.9155 2.4981 0.5119 1.788 0.0737

##

## Likelihood ratio test=3.38 on 1 df, p=0.06581

## n= 23, number of events= 18We’ve already seen that the survival curves don’t cross, so we can be reasonably comfortable fitting this model. To see how the model performs, we can use the summary function:

## Call:

## coxph(formula = Surv(time, status) ~ x, data = aml)

##

## n= 23, number of events= 18

##

## coef exp(coef) se(coef) z Pr(>|z|)

## xNonmaintained 0.9155 2.4981 0.5119 1.788 0.0737 .

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## exp(coef) exp(-coef) lower .95 upper .95

## xNonmaintained 2.498 0.4003 0.9159 6.813

##

## Concordance= 0.619 (se = 0.063 )

## Likelihood ratio test= 3.38 on 1 df, p=0.07

## Wald test = 3.2 on 1 df, p=0.07

## Score (logrank) test = 3.42 on 1 df, p=0.06To check this more carefully we can use the log-log plot

and we see that the curves are close to parallel.

Although the coefficient of x isn’t quite significant at the 0.05 level, it appears there is reasonable evidence that the treatment has an effect (though probably not enough to make a clinical decision).

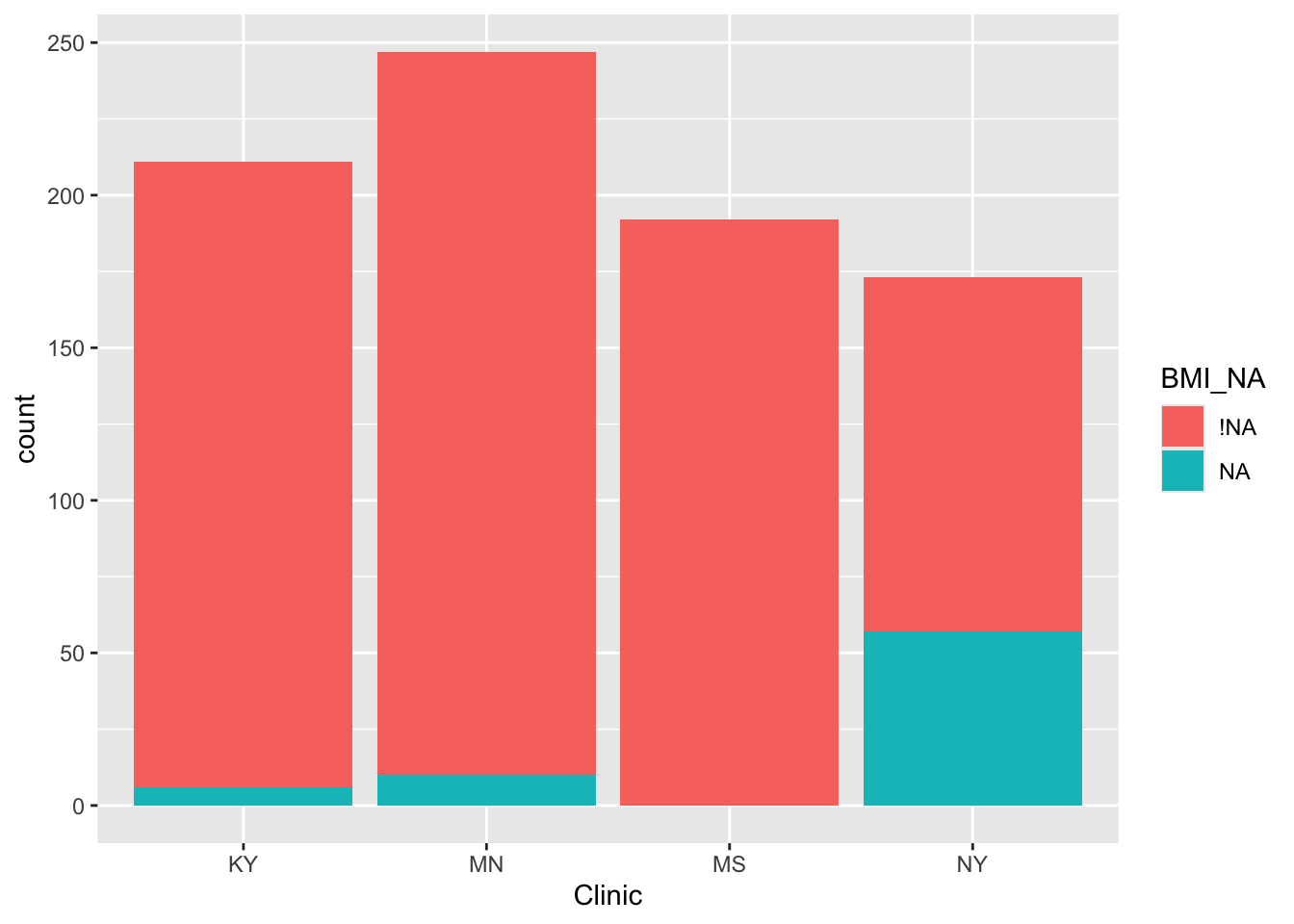

Exercise D.11 The dataset colon, also from the survival package, contains data from a trial of colon cancer patients, comparing three treatments: observation (obs), levamisole (Lev) and levamisole + 5-FU (Lev+5FU). To simplify things, we will restrict the data to those patients on Obs or Lev+5FU. The main report of this trial is Moertel et al. (1990).

colondf = colon[colon$rx!="Lev",]

colondf$rx = as.factor(as.character(colondf$rx)) # Removes Lev factor levelFor this data

- Look at the help file and make sure you understand what the columns mean

- Fit Kaplan-Meier estimators to the survival curves for the two groups.

- Perform some tests to see whether the treatment has a significant effect on the outcome. What do you find?

Click for solution

Solution. From the help file we see that rx is the treatment group, stop is the time variable, status gives the censoring status.

## 'data.frame': 1238 obs. of 16 variables:

## $ id : num 1 1 2 2 3 3 4 4 5 5 ...

## $ study : num 1 1 1 1 1 1 1 1 1 1 ...

## $ rx : Factor w/ 2 levels "Lev+5FU","Obs": 1 1 1 1 2 2 1 1 2 2 ...

## $ sex : num 1 1 1 1 0 0 0 0 1 1 ...

## $ age : num 43 43 63 63 71 71 66 66 69 69 ...

## $ obstruct: num 0 0 0 0 0 0 1 1 0 0 ...

## $ perfor : num 0 0 0 0 0 0 0 0 0 0 ...

## $ adhere : num 0 0 0 0 1 1 0 0 0 0 ...

## $ nodes : num 5 5 1 1 7 7 6 6 22 22 ...

## $ status : num 1 1 0 0 1 1 1 1 1 1 ...

## $ differ : num 2 2 2 2 2 2 2 2 2 2 ...

## $ extent : num 3 3 3 3 2 2 3 3 3 3 ...

## $ surg : num 0 0 0 0 0 0 1 1 1 1 ...

## $ node4 : num 1 1 0 0 1 1 1 1 1 1 ...

## $ time : num 1521 968 3087 3087 963 ...

## $ etype : num 2 1 2 1 2 1 2 1 2 1 ...We can therefore fit (and plot) the Kaplan-Meier estimator split by treatment group.

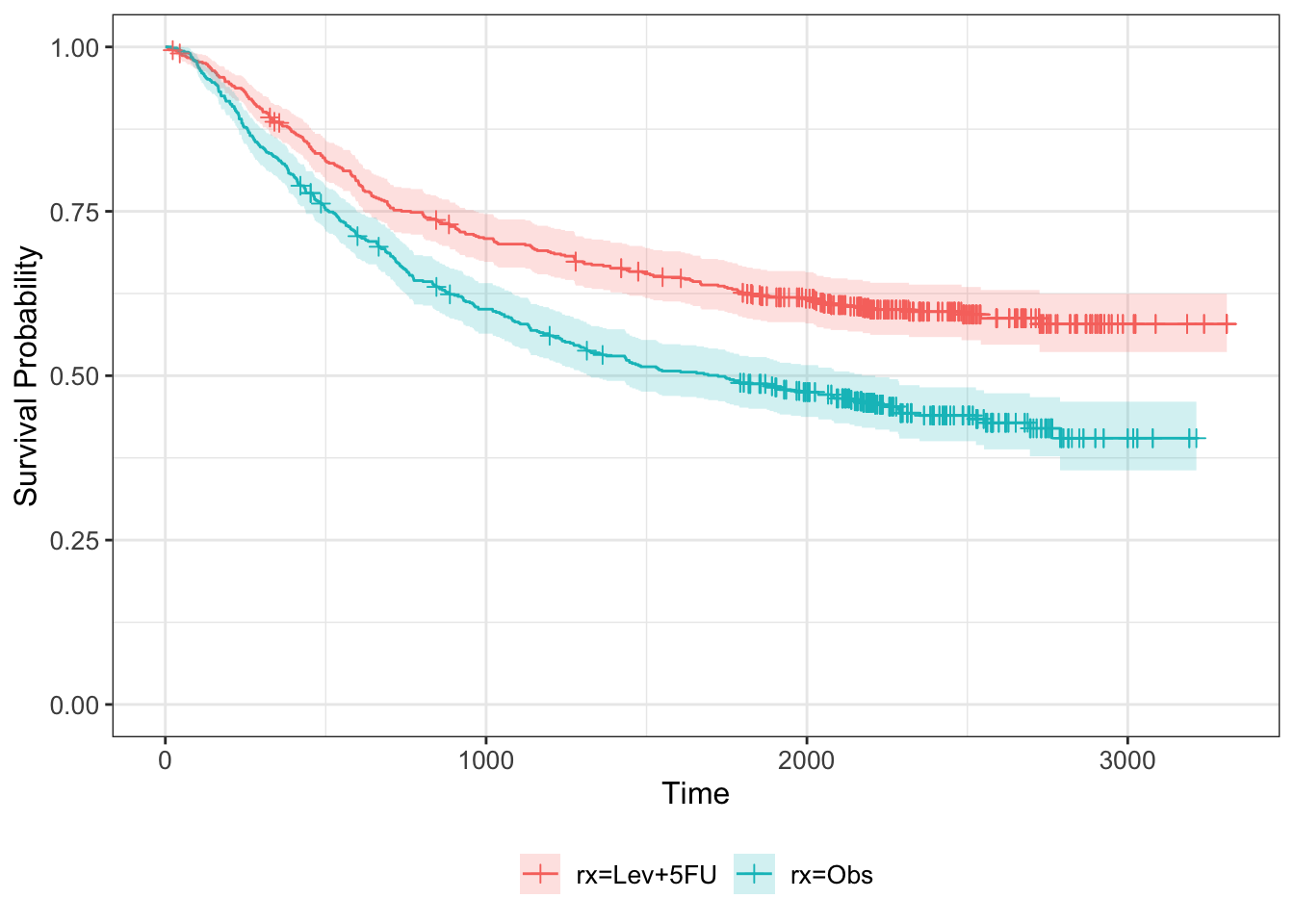

km_colon = survfit(Surv(time, status) ~ rx, data=colondf)

km_colon %>% ggsurvfit + ylim(0,1) +

add_censor_mark() +

add_confidence_interval()

Next we can find the MLE for each treatment group

mC_colon = sum((colondf$status==1)&(colondf$rx=="Obs"))

mT_colon = sum((colondf$status==1)&(colondf$rx=="Lev+5FU"))

tsum_colon_C = sum(colondf$time[colondf$rx=="Obs"])

tsum_colon_T = sum(colondf$time[colondf$rx=="Lev+5FU"])

lamhat_colon_C = mC_colon / tsum_colon_C

lamhat_colon_T = mT_colon / tsum_colon_TWe can then plot the Survival curves using geom_function

# Define survival function for exponential density

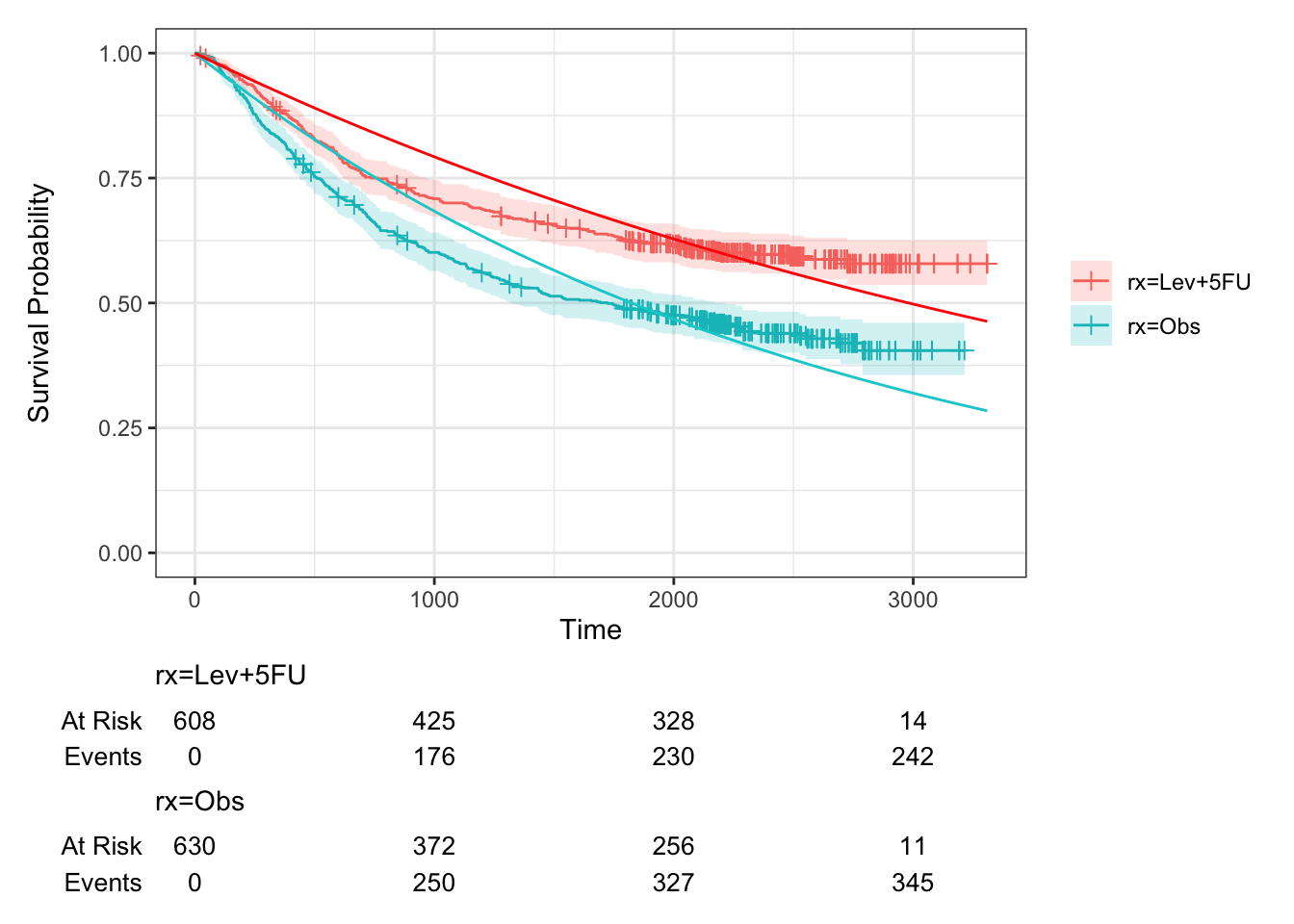

km_colon %>% ggsurvfit() +

add_censor_mark() +

add_risktable() +

add_confidence_interval() +

ylim(0,1) + theme_bw() +

geom_function(fun=exp_st, args=list(lambda = lamhat_colon_C), col="darkturquoise") +

geom_function(fun=exp_st, args=list(lambda = lamhat_colon_T), col="red")

Figure D.2: Survival curves for groups C and T in colon study, fitted assuming an exponential distribution. Kaplan-Meier estimates also shown.

We see that this fit is quite poor.

and we can use these to compute \(\lambda_{LR}\):

LRstat_colon = 2*(mC_colon*log(mC_colon/tsum_colon_C) + mT_colon*log(mT_colon/tsum_colon_T) - m_colon*log(m_colon/tsum_colon))

LRstat_colon## [1] 35.0111Finally, we refer this to \(\chi^2_1\)

## [1] 3.278309e-09Highly significant, but because of the very poor fit in Figure D.2 not especially trustworthy.

Next, we can perform a log-rank test:

## Call:

## survdiff(formula = Surv(time, status) ~ rx, data = colondf, rho = 0)

##

## N Observed Expected (O-E)^2/E (O-E)^2/V

## rx=Lev+5FU 608 242 306 13.5 28.2

## rx=Obs 630 345 281 14.7 28.2

##

## Chisq= 28.2 on 1 degrees of freedom, p= 1e-07We see that the test statistic, although still very significant, is much lower than for the likelihood ratio test.

Finally, we fit a Cox regression model. In the first instance we can do this with just the treatment group as a covariate:

## Call:

## coxph(formula = Surv(time, status) ~ rx, data = colondf)

##

## coef exp(coef) se(coef) z p

## rxObs 0.44213 1.55602 0.08394 5.267 1.38e-07

##

## Likelihood ratio test=28.25 on 1 df, p=1.068e-07

## n= 1238, number of events= 587But we can also include other baseline covariates

## Call:

## coxph(formula = Surv(time, status) ~ rx + sex + age + obstruct +

## nodes, data = colondf)

##

## coef exp(coef) se(coef) z p

## rxObs 0.470039 1.600056 0.085522 5.496 3.88e-08

## sex -0.155126 0.856308 0.083835 -1.850 0.0643

## age -0.002940 0.997064 0.003400 -0.865 0.3872

## obstruct 0.072027 1.074685 0.105813 0.681 0.4961

## nodes 0.104607 1.110274 0.008493 12.317 < 2e-16

##

## Likelihood ratio test=146 on 5 df, p=< 2.2e-16

## n= 1214, number of events= 574

## (24 observations deleted due to missingness)and we see that as well as the treatment arm, the number of lymph nodes with detectable cancer (given by nodes) is highly significant, and sex is also close to significant.

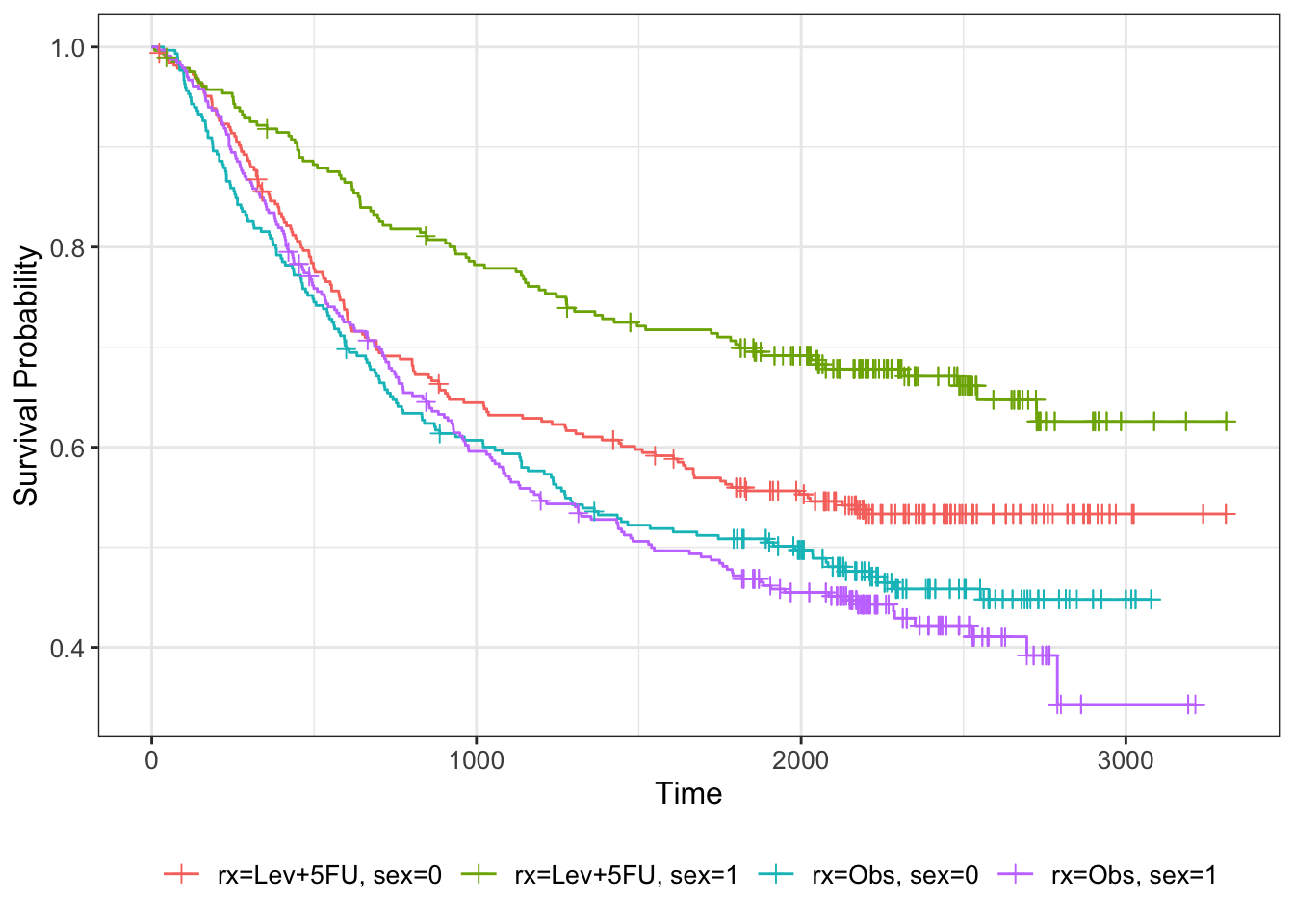

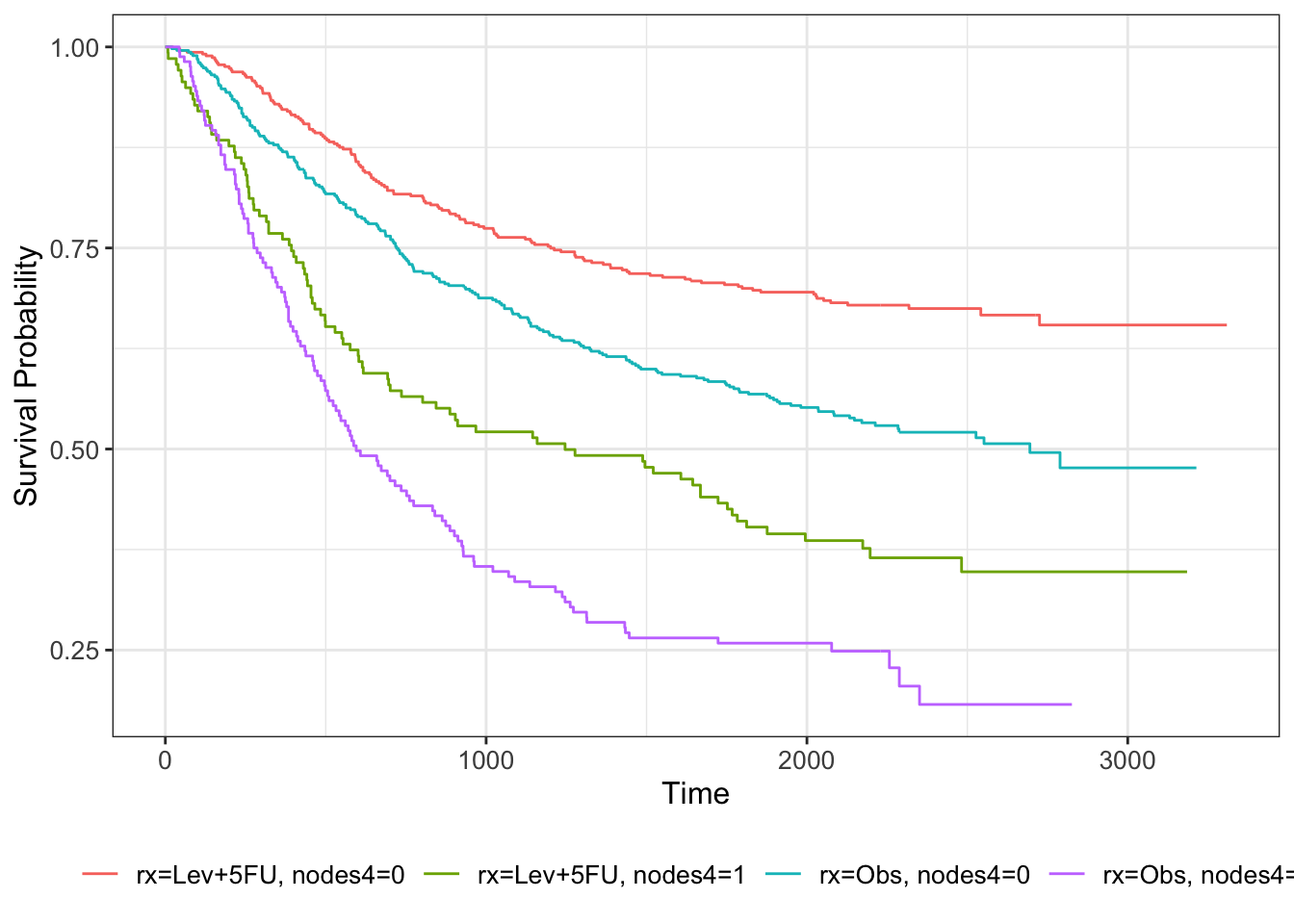

We can visualise this by further subsetting the Kaplan-Meier estimator

km_colon_bl = survfit(Surv(time, status) ~ rx + sex, data=colondf)

km_colon_bl %>% ggsurvfit() +

add_censor_mark()

nodes is a numeric output, so one way to get a visual impression of its effect on the survival curve is to bin it. For example, we can choose \(\texttt{nodes}\leq 4\) and \(\texttt{nodes}>4\).

colondf$nodes4 = sapply(1:nrow(colondf), function(i){ifelse(colondf$nodes[i]>4, 1, 0)})

km_colon_bl = survfit(Surv(time, status) ~ rx + nodes4, data=colondf)

km_colon_bl %>% ggsurvfit()

The separation in the lines fits with the strong effect of nodes in the model.

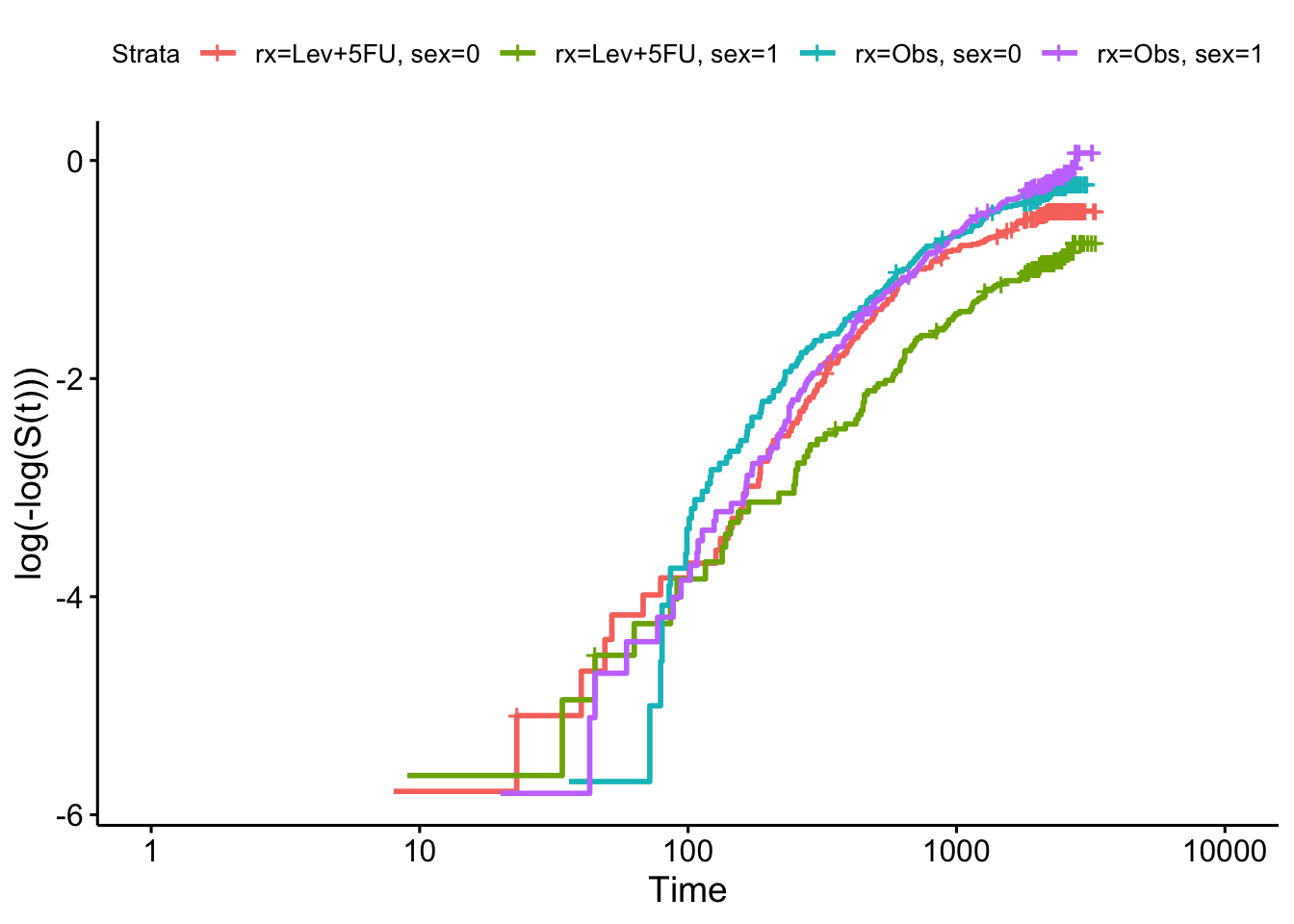

To check our model more carefully we can use the log-log plot for the categorical variables:

km_colon = survfit(Surv(time, status) ~ rx + sex, data=colondf)

ggsurvplot(km_colon, fun = "cloglog")

and the Schoenfeld residuals for the continuous residuals (after removing the insignificant variables):

cox_colon = coxph(formula = Surv(time, status)~rx + sex + nodes, data=colondf)

ggcoxzph(cox.zph(cox_colon), var = "nodes")

and we see that there is insufficient evidence to reject the null hypothesis (of proportional hazards).

D.3 Extension problem: sample size by simulation (part II)

We now combine the work we’ve done, to implement power simulation for a survival outcome trial.

To simulate survival / time-to-event data as though from a clinical trial, we need to consider two things:

- The distribution of the observation/event times (if everyone were observed perfectly)

- The censoring mechanism

For the first, we are likely to use a probability distribution like the exponential (which we saw in Section 7.2.2) or the Weibull (which we looked at briefly in Section 7.2.3).

For the second, there are two aspects:

- Anyone who is still alive (or hasn’t experienced the event) by the end of the trial will be censored at that point

- There will be some censoring during the lifetime of the trial, and this should also be randomly generated.

In this practical we’ll use an Exponential distribution. If you want to read more about this approach, you can read Jiang et al. (2012), who use a Weibull distribution.

D.3.1 Minimum detectable effect size

One of the first practical issues we need to tackle is the concept of the difference between the treatment and control group outcomes, and the idea of the minimum difference we want to be able to detect. Ideally this should be something clinically meaningful. We also need to work out how to factor this into the simulation.

Adapting the approach followed by Jiang et al. (2012), our steps will be:

- Specify the median survival time for the control and treatment groups, \(M_C\) and \(M_T\). We’re assuming that ‘the event’ is bad, and so we’ll assume \(M_T>M_C\). This is how the MDES concept enters the simulation, so \(M_T\) should be the smallest value of \(M_T\) we want to be able to detect (ie. creating the smallest clinically worthwhile improvement from \(M_C\), which we assume to be well understood).

- Use \(M_C,\,M_T\) to find the parameters \(\lambda_C,\,\lambda_T\), which then give us our observation time distribution function for each group.

D.3.2 Censoring

Censoring will happen in two ways:

- An endpoint \(T_{max}\) is specified as part of the trial design, and any observation after \(T_{max}\) is censored at \(T_{max}\)

- Every simulation is censored with a specified drop-out probability \(\pi\), using a Bernoulli distribution. The value of the censored observation is kept at the value simulated from the exponential distribution.

There are other ways to model drop-out, but this relatively simple method works nicely.

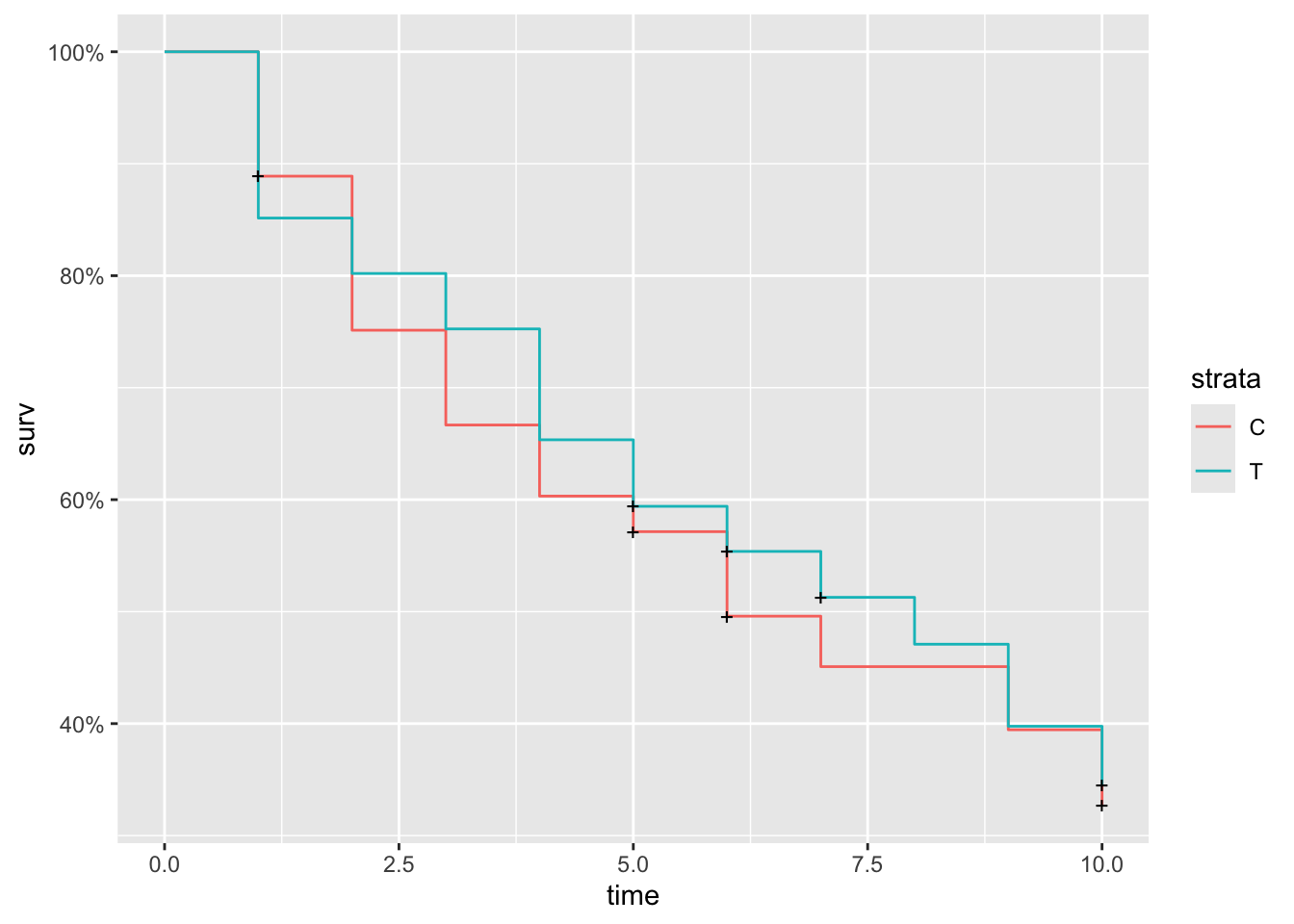

Exercise D.12 Write code to simulate a survival trial dataset as described above. Using this function, simulate data for a trial where \(M_T=7,\,M_C=5,\,\pi=0.1, t_{max}=10\), with approximately 100 participants in each group.

- Plot the Kaplan-Meier estimated survival curve of your data

- What are some of the modelling assumptions/simplifications that have been made?

Some technical things to note:

- For the

Survfunction to interpret the time and status as right censored, it seems that the status variable has to be numeric (not factor, which would seem more logical)

Click for hint 1 (about finding \(\lambda_C,\,\lambda_T\))

The CDF of the exponential distribution is

\[ P\left(X \leq x\right) = 1 - e^{-\lambda x}.\] You can find the median value of \(x\) by setting the CDF to 0.5, which gives a median value of

\[\frac{\ln{2}}{\lambda}.\]

Click for hint 2 (about setting up the simulated data)

Your simulated participant data frame should have three columns (they don’t need to have these exact names):

- Time

- Status (censored = 0, complete = 1)

- Group (Control or Treatment)

Click for solution

Solution. As we did in Section C we’ll write functions so that we can do all of this lots of times. Firstly, we rearrange the median equation in the hint above to find the \(\lambda_T,\,\lambda_C\):

\[\lambda_X = \frac{\ln2}{M_X},\] where \(X\) is \(C\) or \(T\).

Therefore we have \(\lambda_C = 0.139,\,\lambda_T = 0.099\).

## THIS IS DEFNITELY NOT GIVING THE RIGHT DATA!

surv_data = function(

npart, # number of participants per group (roughly)

MC, # median survival time - group C

MT, # median survival time.- group T

p_cens, # probability of censoring for each observation

t_max # end point of trial

){

rateC = log(2)/(MC)

rateT = log(2)/(MT)

dat_mat = matrix(NA, nrow=2*npart, ncol=3)

dat_df = as.data.frame(dat_mat)

names(dat_df) = c("time", "status", "group")

for (i in 1:(2*npart)){

# Using SRS here, but could use something else

group_i = sample(c("C", "T"), size=1)

# Generate time accordingly

if (group_i == "C"){

time_i = round(rexp(1, rate = rateC))

} else if (group_i == "T"){

time_i = round(rexp(1, rate = rateT))

}

# Determine whether observation i is censored

status_i = sample(c(0,1), size=1, prob = c(p_cens, 1-p_cens))

if(time_i>t_max){

time_i = t_max

status_i = 0

}

# Having a zero time observation messes things up so we fudge it slightly

if(time_i == 0){

time_i = 1

status_i = 1

}

dat_df[i,] = c(time_i, status_i, group_i)

}

# Round to 0 dp for a hint of reality

dat_df$time = as.numeric(dat_df$time)

dat_df$status = as.numeric(dat_df$status)

dat_df$group = as.factor(dat_df$group)

return(dat_df)

}We can use the function to simulate the data described above

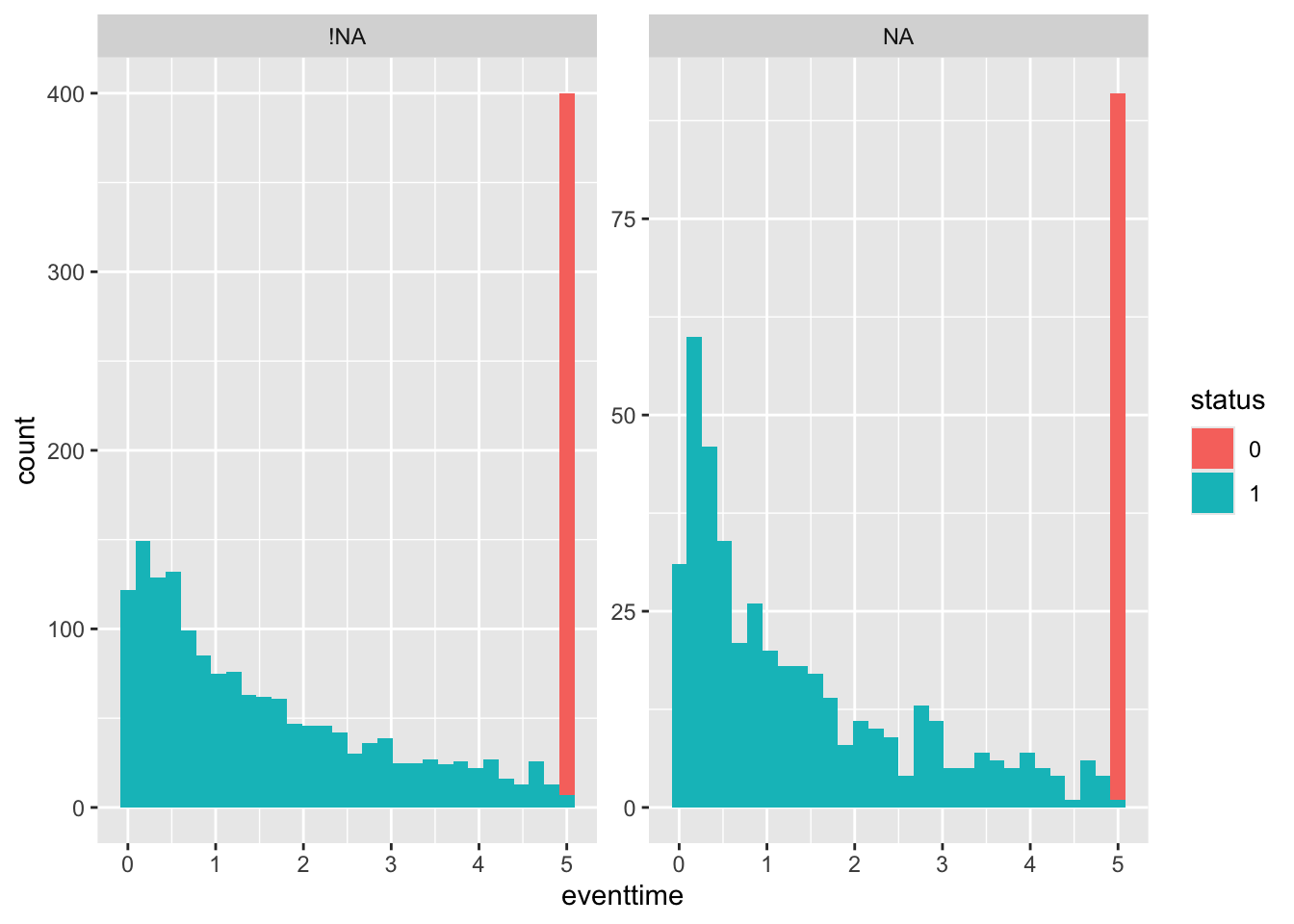

surv_df1 = surv_data(npart=100, MC=5, MT=7, p_cens=0.1, t_max=10)

with(data = surv_df1, Surv(time, status, type = "right"))## [1] 3 1 10+ 3 1 10 10+ 10+ 10+ 6 9 6+ 3 2 9 5 1 10+ 2 1 1 10+ 6 1

## [25] 9 5 4 8 8 10+ 6 6 3 10+ 1 10+ 4 2 2 1 6+ 5+ 10+ 4 9 9 10+ 3

## [49] 1 10+ 4 10 10+ 9 6 6 2 10 4 7 10 2 1 1 7 1 2 10+ 2 1 10+ 1

## [73] 10+ 4 10 2 5+ 10+ 9 3 1 6 2 10+ 10+ 10+ 7 10+ 4 10+ 3 10 5 3 10+ 3

## [97] 10+ 9 1+ 1 10+ 3 1 10+ 1 10+ 2 1 1+ 10+ 10+ 1 2 10+ 10+ 10+ 4 10 10+ 10+

## [121] 1 8 3 10 4 1 10+ 10+ 4 7 1 1 7 10+ 4 2 10+ 5 3 10+ 5 9 10 10+

## [145] 2 4 6+ 5 10+ 10+ 10+ 3 6 5 10+ 10+ 10+ 10+ 9 10+ 10+ 1+ 10+ 6 2 10+ 4 10+

## [169] 10+ 10+ 10+ 7 7 7 9 10+ 2 5 10+ 4 1 10+ 5 10+ 2 2 8 6 1 9 6 10

## [193] 10+ 1+ 10 4 4 10+ 7+ 10+and plot the estimated survival curve

In this sampling scheme it seems it’s common to get some very low values, which is probably quite unrealistic.

Some other assumptions / simplifications we’re making are:

- Censorings during the trial are completely at random (simulated via a Bernoulli distribution). In reality this is very unlikely; it’s far more likely that people become lost to follow-up for a reason, and that within the dataset the instances of this are not independent.

- Censored data keep the same time as was generated by the exponential distribution. This will slightly skew our results.

Having set up a function to simulate data for one trial, we can run it many times to estimate the power of this trial set up. The extra pieces of information we need for this are:

- The significance level \(\alpha\) - we will assume \(\alpha = 0.05\)

- The test to be used - we will use the log rank test (as in Section D.2.2.2).

Exercise D.13 Using the function you wrote in Exercise D.12 and the log-rank test, write a function to estimate the power of a trial set-up.

Assuming the same parameters as in Exercise D.12 and a significance level of \(\alpha=0.05\):

- Estimate the number of participants you need per trial group to achieve a power of 80%.

- Suppose the clinicians decided to extend the trial so that \(t_{max}=20\). How many participants do you now need (approxiamtely) to achieve a power of 80%?

Click for solution

Solution.

surv_sim = function(

nsim, # number of simulations to run

alpha, # the significance level of the test

npart, # number of participants per group (roughly)

MC, # median survival time - group C

MT, # median survival time.- group T

p_cens, # probability of censoring for each observation

t_max # end point of trial

){

H0_reject_vec = rep(NA, nsim)

for (i in 1:nsim){

df_i = surv_data(npart=npart, MC=MC, MT=MT, p_cens=p_cens, t_max=t_max)

log_rank_i = survdiff(Surv(time, status) ~ group, data=df_i, rho=0)

p_val = log_rank_i$pvalue

H0_reject_vec[i]= ifelse(p_val < alpha, 1, 0)

}

power.est = mean(H0_reject_vec)

power.est

}We can now use this to find the sample size we need by experimenting with some values.

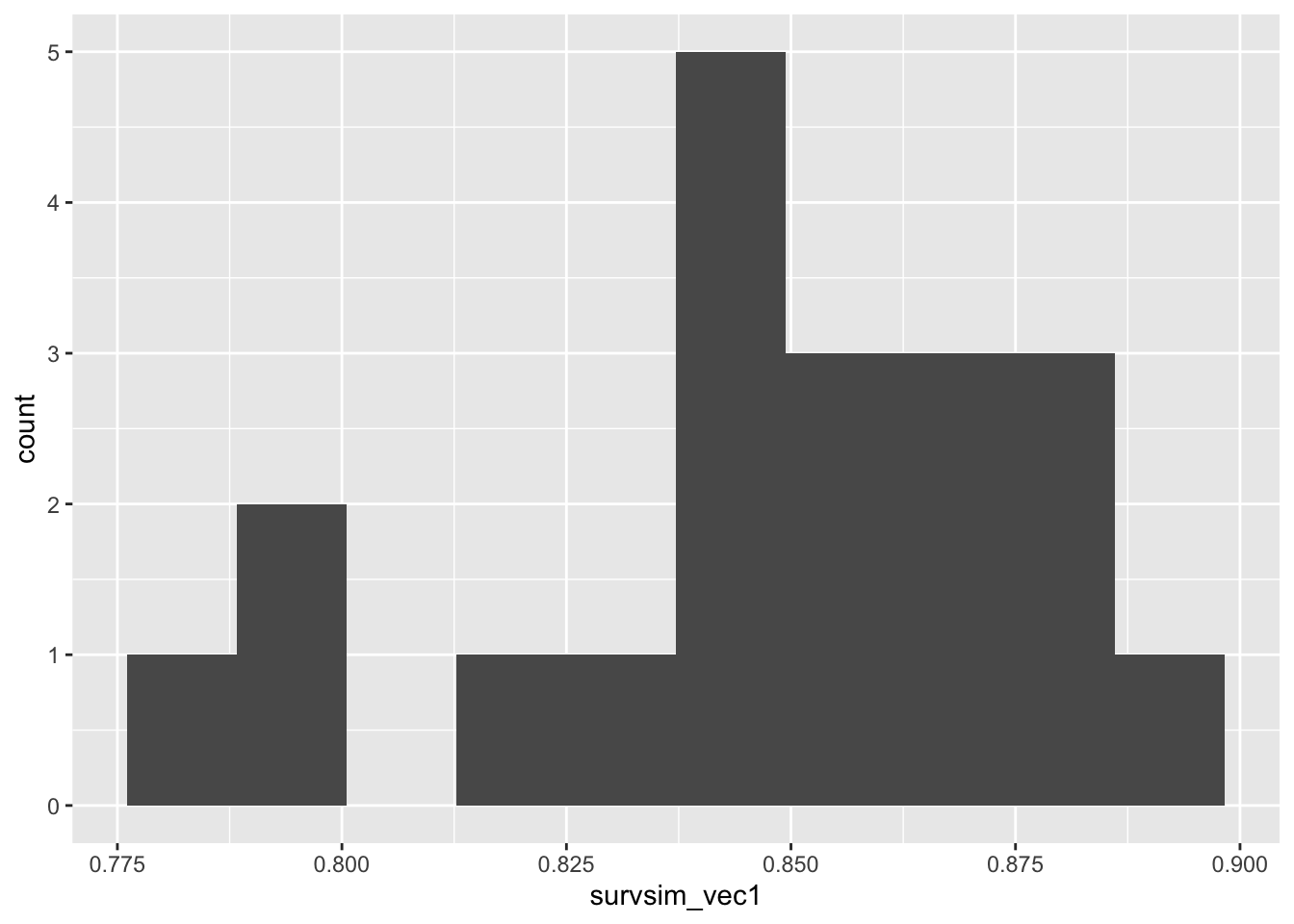

## [1] 0.82survsim_vec1 = rep(NA, 20)

for (i in 1:20){

survsim_vec1[i] = surv_sim(nsim=100, alpha=0.05, npart=250,MC=5, MT=7, p_cens=0.1, t_max=10)

}

ggplot(mapping = aes(survsim_vec1)) + geom_histogram(bins=10)

If we extend the trial duration to \(t_{max}=20\), the power given by a particular number of participants is now larger, because there are fewer censored observations and therefore more information. Some more experimentation with sample size would enable us to find a suitable number for this new scenario.

There are of course lots of ways we could refine and extend this, many of them similar to what we discussed in Section C, for example:

- Running more simulations (100 is really not enough, but was chosen so that the file would compile - feel free to increase it)

- Incorporating a better sampling scheme than SRS

- Improving the drop-out / censoring modelling

- Allowing the shape parameter to vary (though being careful of the implications on analysis)